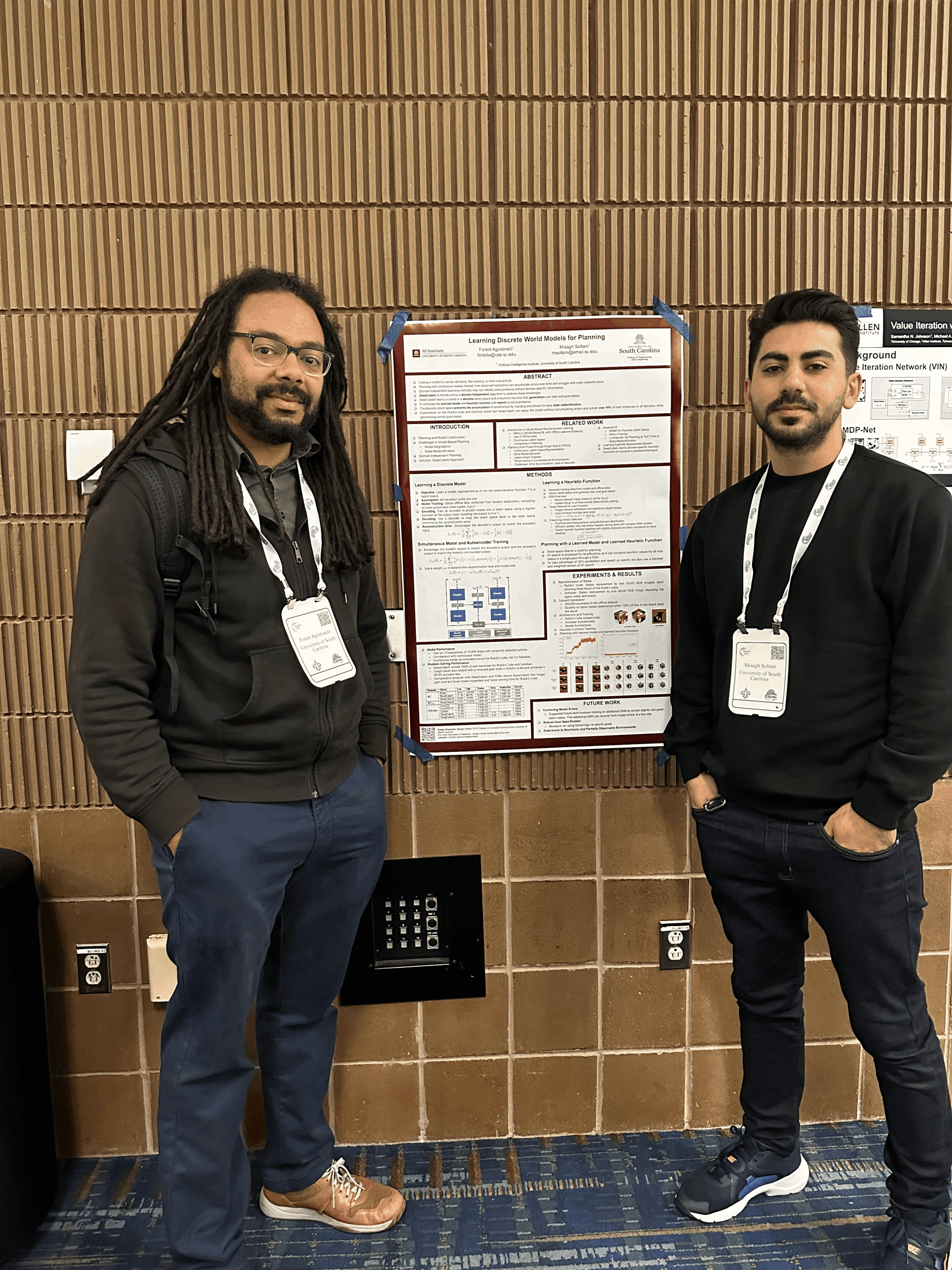

Forest Agostinelli

Assistant Professor - AI Institute - Computer Science and Engineering

University of South Carolina

Email: foresta@cse.sc.edu

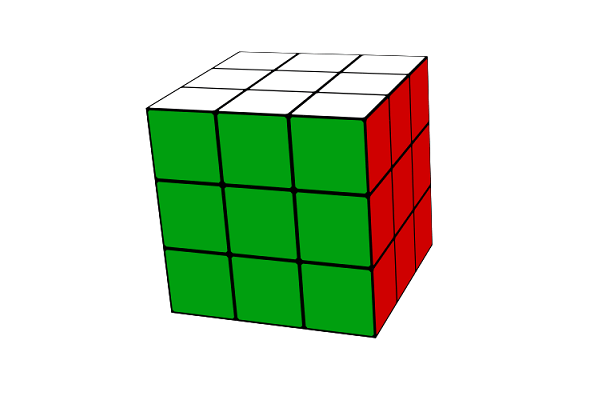

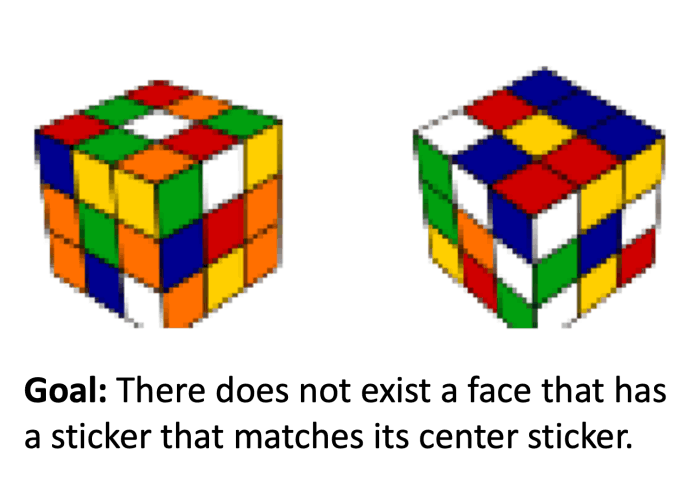

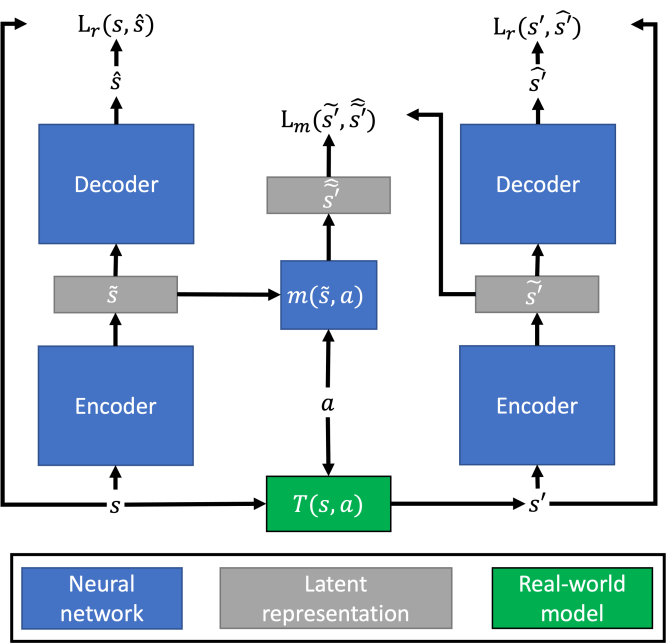

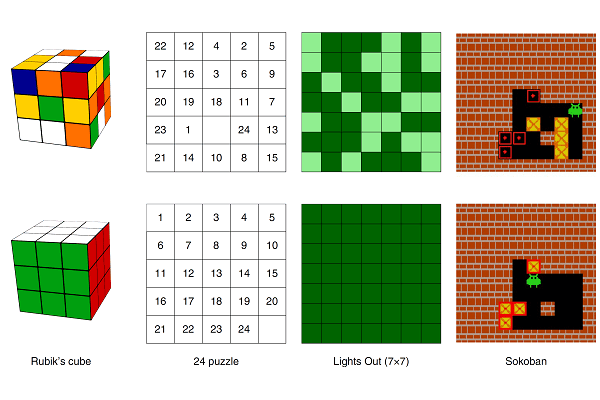

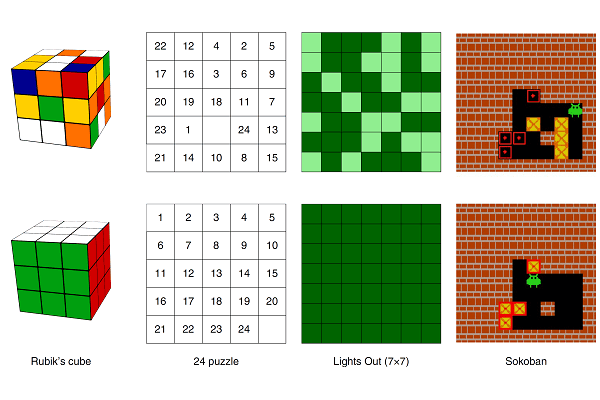

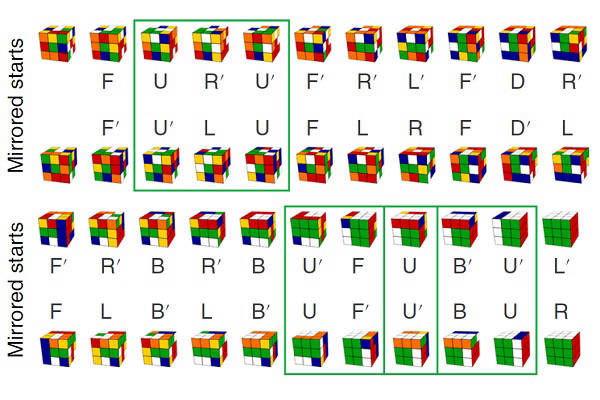

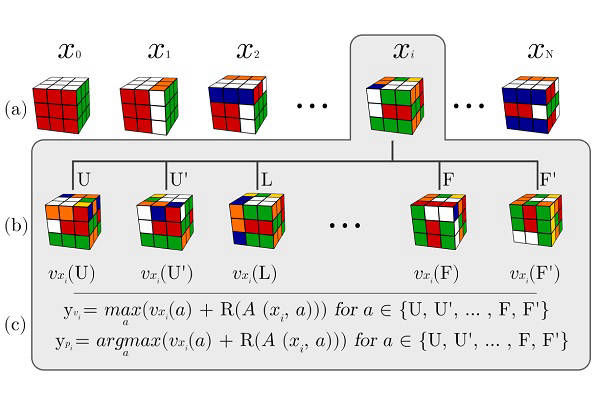

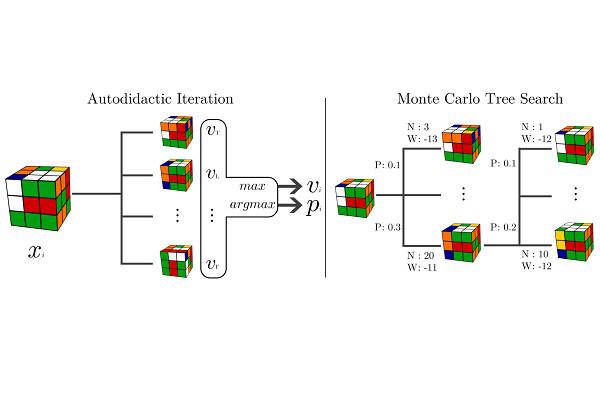

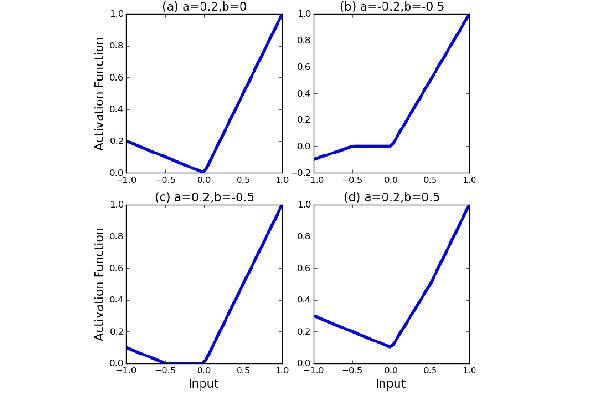

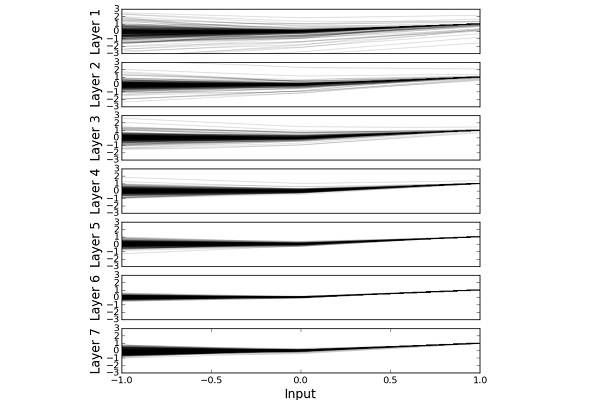

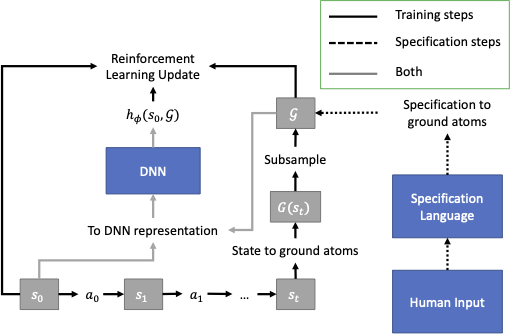

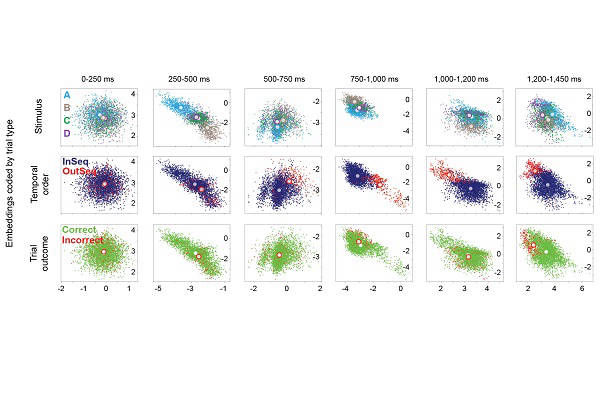

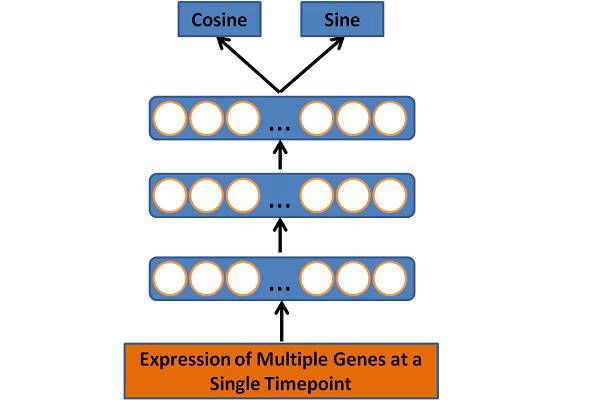

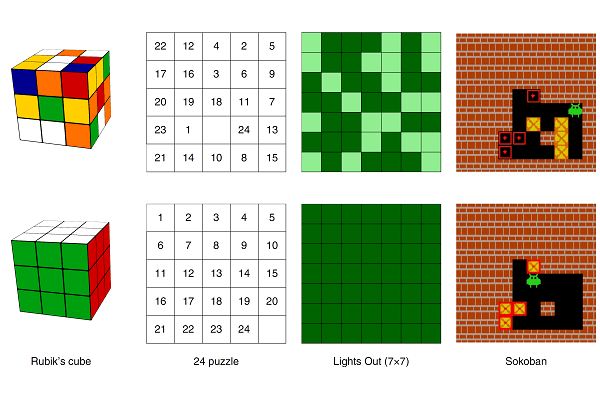

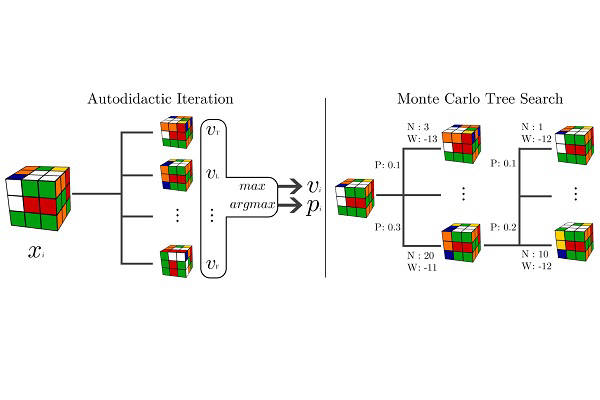

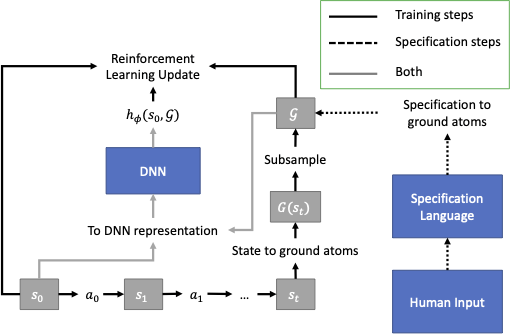

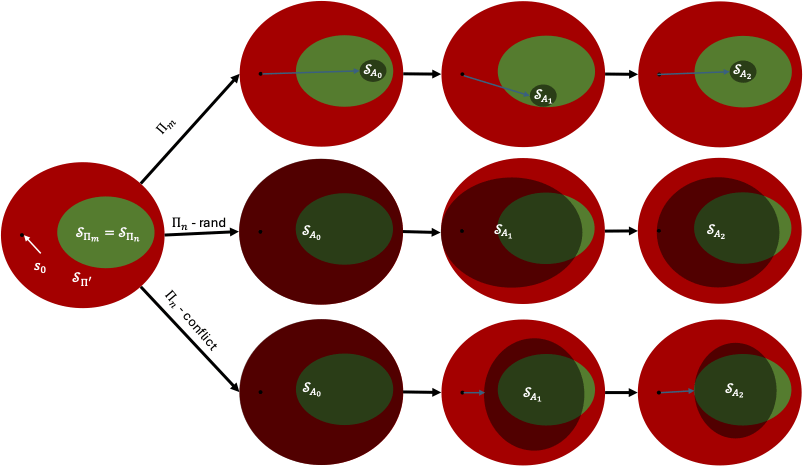

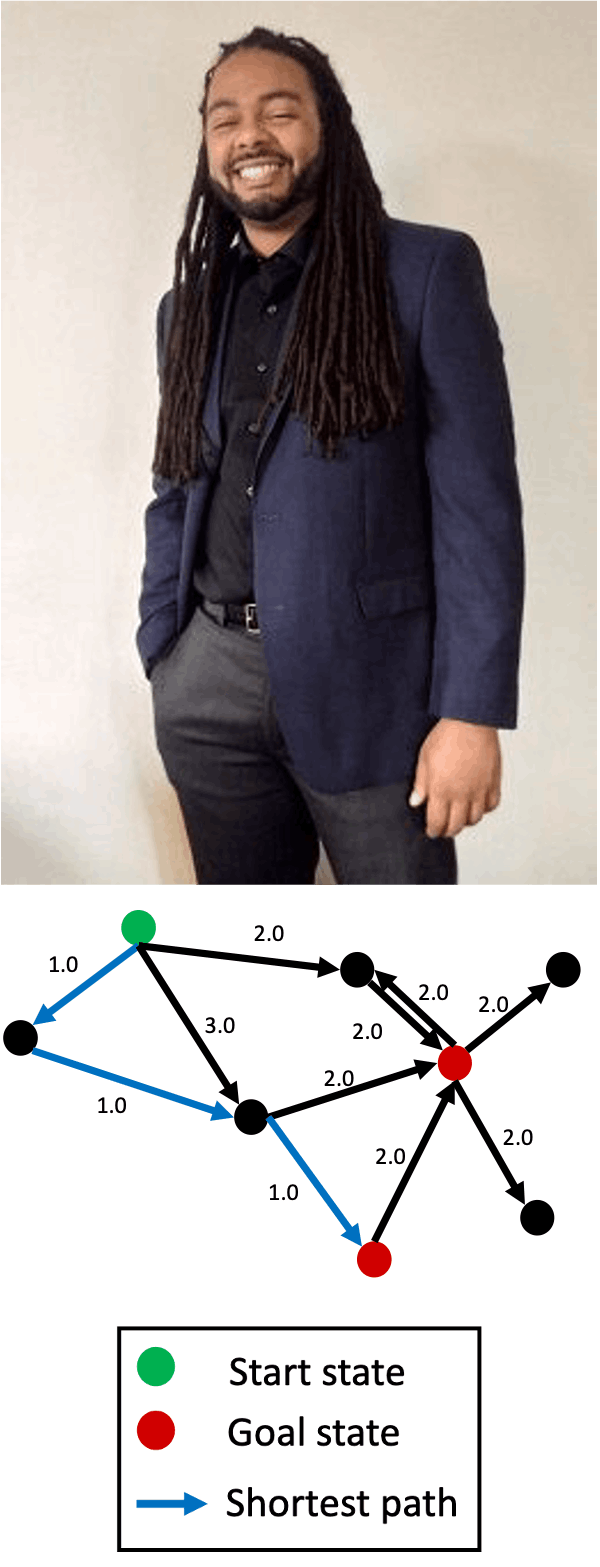

I am an assistant professor at the University of South Carolina. My research goal is to create artificial intelligence (AI) algorithms that can solve any pathfinding problem, where the objective of a pathfinding problem is to find a sequence of actions that forms a path from a given start state to a given goal. The methods employed in my research group include deep learning, reinforcement learning, heuristic search, and formal logic.
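To make the definition concrete, here is a minimal sketch of a pathfinding problem in Python. It is an illustration only and uses plain breadth-first search, not the deep learning or reinforcement learning methods mentioned above. The names `find_path` and `neighbors` and the toy number puzzle are assumptions made for the example.

```python
from collections import deque


def find_path(start, is_goal, neighbors):
    """Breadth-first search over states.

    Returns a shortest list of actions leading from `start` to a state
    satisfying `is_goal`, or None if no such state is reachable.
    """
    frontier = deque([start])
    came_from = {start: None}  # state -> (previous state, action taken)
    while frontier:
        state = frontier.popleft()
        if is_goal(state):
            # Walk back to the start, collecting actions in reverse order.
            actions = []
            while came_from[state] is not None:
                state, action = came_from[state]
                actions.append(action)
            return actions[::-1]
        for action, next_state in neighbors(state):
            if next_state not in came_from:
                came_from[next_state] = (state, action)
                frontier.append(next_state)
    return None


# Hypothetical toy problem: reach 10 from 1 using the actions "+1" and "*2".
def neighbors(n):
    # Cap the state space so the search always terminates.
    return [("+1", n + 1), ("*2", n * 2)] if n < 20 else []


path = find_path(1, lambda n: n == 10, neighbors)
print(path)
```

Here the start state is 1, the goal is any state equal to 10, and the returned list is the sequence of actions that forms the path. Breadth-first search is only practical for small state spaces like this one.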

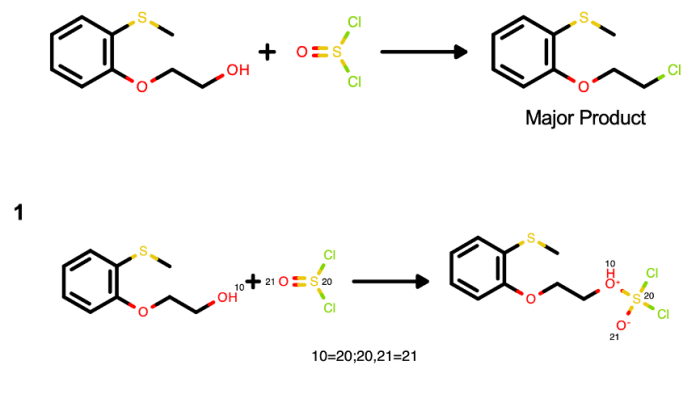

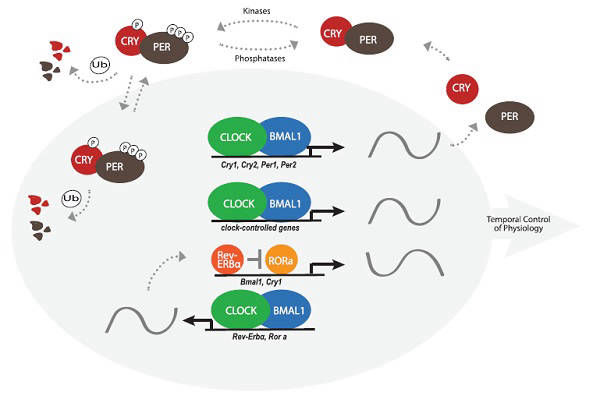

Pathfinding problems include robot path planning, theorem proving, chemical synthesis, program synthesis, and quantum circuit synthesis. Using AI to automate the search for solutions to these problems could rapidly advance these fields. Furthermore, my research group seeks to incorporate explainable AI to enable collaboration with humans and to automate the discovery of new knowledge.

More broadly, it is my opinion that AI is, at its core, the study of algorithms that write algorithms (i.e., meta-algorithms). Since writing an algorithm can be posed as a pathfinding problem, I believe that solving pathfinding is key to solving AI.

I earned my Ph.D. in computer science at the University of California, Irvine, my M.S. in computer science at the University of Michigan, and my B.S. in electrical and computer engineering at The Ohio State University.